Controller Learning using Bayesian Optimization

Figure 1

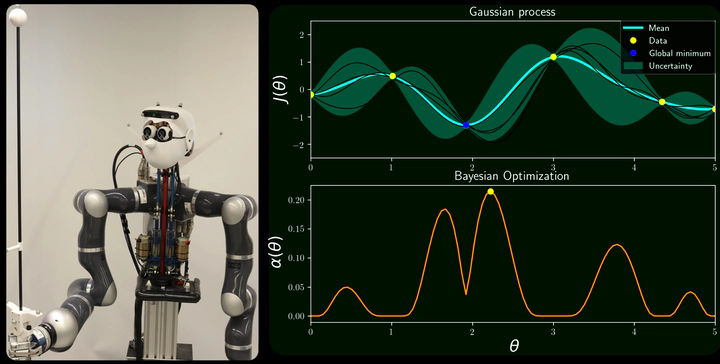

Autonomous systems that operate in the real world are characterized by a multitude of feedback control loops operating at different time scales in a hierarchical manner. For example, a humanoid robot that navigates a cluttered environment is continuously sensing obstacles and replanning its path; this feedback loop operates at a top level on slow time scales. Simultaneously, at a lower level and on faster time scales, the robot tracks the desired joint positions to achieve the required movements.

Designing and fine-tuning these control loops in practice typically requires large amounts of costly resources and human expertise. To mitigate such costs, it is desirable to design intelligent algorithms that allow autonomous systems to iteratively improve their feedback control loops by interacting with the real world. In my research, I leverage and adapt machine learning tools to outperform manual controller tuning while retaining safety throughout the process. In the following, I describe a series of projects that demonstrate the validity and usefulness of the proposed automatic controller tuning framework.

Automatic controller tuning

Our proof of concept is demonstrated in [1], where a humanoid robot balancing an inverted pole automatically improves its low-level control loop. Because interactions with the real world are time-consuming, we use Bayesian optimization to make efficient use of the collected data. Specifically, we use Entropy Search (ES) [2], which represents the latent control objective as a Gaussian process (GP) (see Fig. 1) and suggests the control-loop parameters that are expected to be most informative about the location of the optimum. Our results, summarized in the video below, outperform manual tuning by ~30% in under 20 experiments.
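To make the tuning loop concrete, here is a minimal Python sketch under simplifying assumptions; it is not the implementation used in [1]. The toy scalar plant, its parameters, the helper names (`closed_loop_cost`, `gp_posterior`, `tune_gain`), and the kernel length scale are all hypothetical. Instead of Entropy Search, the sketch uses a simpler lower-confidence-bound rule to pick the next experiment, which keeps the example short while preserving the overall pattern: fit a GP to the observed costs, choose the next controller gain from the GP, run the experiment, repeat.

```python
import numpy as np


def closed_loop_cost(gain, a=1.2, b=1.0, q=1.0, r=0.1, x0=1.0, steps=50):
    """One 'experiment': quadratic cost of x[k+1] = a*x[k] + b*u[k] with u = -gain*x."""
    x, cost = x0, 0.0
    for _ in range(steps):
        u = -gain * x
        cost += q * x**2 + r * u**2
        x = a * x + b * u
    return cost


def rbf(xa, xb, length=0.15):
    """Squared-exponential kernel with unit variance (a generic, structure-agnostic choice)."""
    d = xa[:, None] - xb[None, :]
    return np.exp(-0.5 * (d / length) ** 2)


def gp_posterior(x_train, y_train, x_query, noise=1e-4):
    """Posterior mean and standard deviation of a zero-mean GP at x_query."""
    K = rbf(x_train, x_train) + noise * np.eye(len(x_train))
    Ks = rbf(x_train, x_query)
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y_train))
    v = np.linalg.solve(L, Ks)
    var = np.clip(1.0 - np.sum(v**2, axis=0), 0.0, None)
    return Ks.T @ alpha, np.sqrt(var)


def tune_gain(n_experiments=15, kappa=2.0):
    """Sequentially pick gains by minimizing the GP lower confidence bound (not ES)."""
    grid = np.linspace(0.3, 2.0, 200)   # candidate gains; all stabilize this toy plant
    gains = np.array([0.3, 2.0])        # two initial experiments
    costs = np.array([closed_loop_cost(g) for g in gains])
    while len(gains) < n_experiments:
        y = (costs - costs.mean()) / (costs.std() + 1e-12)  # standardize for the GP
        mean, std = gp_posterior(gains, y, grid)
        candidate = grid[np.argmin(mean - kappa * std)]
        gains = np.append(gains, candidate)
        costs = np.append(costs, closed_loop_cost(candidate))
    best = np.argmin(costs)
    return gains[best], costs[best], gains, costs


best_gain, best_cost, gains, costs = tune_gain()
print(f"best gain {best_gain:.2f} with cost {best_cost:.3f} after {len(gains)} experiments")
```

On a real system, `closed_loop_cost` would be replaced by a physical experiment, which is why the number of calls to it is the quantity to keep small.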

We have extended this framework in several directions to further improve data efficiency. When auto-tuning complex real systems (like humanoid robots), simulations of the system dynamics are typically available. They provide less accurate information than real experiments, but at a lower cost. Under a limited experimental budget (i.e., total experiment time), our sim2real work [3] extends ES to include the simulator as an additional information source and to automatically trade off information against cost. The research video below illustrates this idea.
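The information-versus-cost trade-off can be illustrated with a small Python sketch. It rests on several assumptions that are not taken from the text above: the real objective is modeled as a simulated objective plus an independent error term, so the kernel is `k_sim + d*d'*k_err` with `d = 0` for simulation and `d = 1` for a real experiment; the kernel hyperparameters and the costs in `SOURCE_COST` are hypothetical; and information gain is approximated by the reduction in posterior variance of the real objective at a single point, a much cruder measure than the entropy-based criterion of ES.

```python
import numpy as np

SOURCE_COST = {0: 1.0, 1: 10.0}  # hypothetical costs: 0 = simulation, 1 = real experiment


def rbf(xa, xb, length, var):
    d = xa[:, None] - xb[None, :]
    return var * np.exp(-0.5 * (d / length) ** 2)


def two_source_kernel(xa, da, xb, db):
    """Shared simulator term plus an error term that only real experiments see."""
    k_sim = rbf(xa, xb, length=0.3, var=1.0)
    k_err = rbf(xa, xb, length=0.3, var=0.2)
    return k_sim + np.outer(da, db) * k_err


def real_variance(x_obs, d_obs, x_query, noise=1e-4):
    """Posterior variance of the REAL objective at x_query given the observations."""
    xq, dq = np.array([x_query], dtype=float), np.ones(1)
    prior = two_source_kernel(xq, dq, xq, dq)[0, 0]
    if len(x_obs) == 0:
        return prior
    K = two_source_kernel(x_obs, d_obs, x_obs, d_obs) + noise * np.eye(len(x_obs))
    Ks = two_source_kernel(x_obs, d_obs, xq, dq)
    return prior - (Ks.T @ np.linalg.solve(K, Ks))[0, 0]


def pick_source(x_obs, d_obs, x_query):
    """Choose the source with the largest variance reduction per unit cost."""
    before = real_variance(x_obs, d_obs, x_query)
    scores = {}
    for source, cost in SOURCE_COST.items():
        after = real_variance(np.append(x_obs, x_query), np.append(d_obs, source), x_query)
        scores[source] = (before - after) / cost
    return max(scores, key=scores.get), scores


# With no data, the cheap simulator is the better deal.
first, _ = pick_source(np.array([]), np.array([]), 0.5)
# Once the simulator has been queried there, only a real experiment adds information.
second, _ = pick_source(np.array([0.5]), np.array([0.0]), 0.5)
print(first, second)
```

The design point is that simulation data can never reduce the uncertainty contributed by the error term, so beyond some point a real experiment becomes worth its higher cost.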

The aforementioned auto-tuning methods model the performance objective using standard GP models, which are typically agnostic to the control problem. In [4], we tailor the covariance function of the GP model to the control problem at hand by incorporating its mathematical structure into the kernel design. As a result, the objective is predicted more accurately at parameters that have not yet been tested, which ultimately speeds up the convergence of the Bayesian optimizer.
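As a rough illustration of what "incorporating structure into the kernel" can mean, the Python sketch below builds a covariance function from the closed-form cost of a toy scalar LQR problem. This is a hypothetical construction for illustration only and is not the kernel derived in [4]: it assumes a scalar plant with uncertain parameters `a` and `b` (the sampling ranges are made up), evaluates the known rational cost function for each sampled plant, and uses the sample covariance of those costs across plants as the kernel.

```python
import numpy as np


def lqr_cost(gain, a, b, q=1.0, r=0.1):
    """Infinite-horizon cost of x[k+1] = a*x[k] + b*u[k], u = -gain*x, from x0 = 1.

    Closed form (q + r*gain^2) / (1 - (a - b*gain)^2), valid when |a - b*gain| < 1.
    """
    closed = a - b * gain
    return (q + r * gain**2) / (1.0 - closed**2)


def structured_kernel(gains_a, gains_b, n_models=500, seed=0):
    """Covariance of the LQR cost across sampled plants (hypothetical uncertainty ranges)."""
    rng = np.random.default_rng(seed)
    a = rng.uniform(1.1, 1.3, n_models)
    b = rng.uniform(0.9, 1.1, n_models)
    fa = lqr_cost(gains_a[:, None], a[None, :], b[None, :])
    fb = lqr_cost(gains_b[:, None], a[None, :], b[None, :])
    fa = fa - fa.mean(axis=1, keepdims=True)
    fb = fb - fb.mean(axis=1, keepdims=True)
    return fa @ fb.T / n_models


# Gains in [0.6, 1.8] stabilize every sampled plant, so the closed form applies.
gains = np.linspace(0.6, 1.8, 7)
K = structured_kernel(gains, gains)
print(np.round(K, 3))
```

Unlike a generic squared-exponential kernel, this covariance grows sharply toward gains that bring some plausible plants close to instability, which is the kind of problem-specific behavior a structure-agnostic GP would have to learn from data.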

Bayesian optimization provides a powerful framework for controller learning, which we have successfully applied in very different settings: humanoid robots [1], micro robots [5], and the automotive industry [6]. The research video below applies this framework to a high-dimensional hydraulic two-legged robot performing a squatting task.

References

[1] Automatic LQR Tuning Based on Gaussian Process Global Optimization

A. Marco, P. Hennig, J. Bohg, S. Schaal, and S. Trimpe

Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), May 2016, pp. 270–277

[code] [video]

[2] Entropy search for information-efficient global optimization

P. Hennig and C. J. Schuler

Journal of Machine Learning Research, vol. 13, no. 1, pp. 1809–1837, 2012

[3] Virtual vs. Real: Trading Off Simulations and Physical Experiments in Reinforcement Learning with Bayesian Optimization

A. Marco, F. Berkenkamp, P. Hennig, A.P. Schoellig, A. Krause, S. Schaal, and S. Trimpe

Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), May 2017, pp. 1557–1563

[paper] [code] [spotlight presentation] [video]

[4] On the Design of LQR Kernels for Efficient Controller Learning

A. Marco, P. Hennig, S. Schaal, and S. Trimpe

Proceedings of the 56th IEEE Annual Conference on Decision and Control (CDC), Dec. 2017, pp. 5193–5200

[paper] [presentation]

[5] Gait learning for soft microrobots controlled by light fields

A. von Rohr, S. Trimpe, A. Marco, P. Fischer, and S. Palagi

Proceedings of the IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Oct. 2018, pp. 6199–6206

[paper]

[6] Data-efficient Auto-tuning with Bayesian Optimization: An Industrial Control Study

M. Neumann-Brosig, A. Marco, D. Schwarzmann, and S. Trimpe

IEEE Transactions on Control Systems Technology, vol. 28, no. 3, pp. 730–740, Jan. 2019

[paper] [code]